The Transformation of Risk Regulation: Managing Uncertainty and Powers in the Digital Age

The End of the Metric: Why Europe is Turning Tech Regulation into a Constitutional Battleground

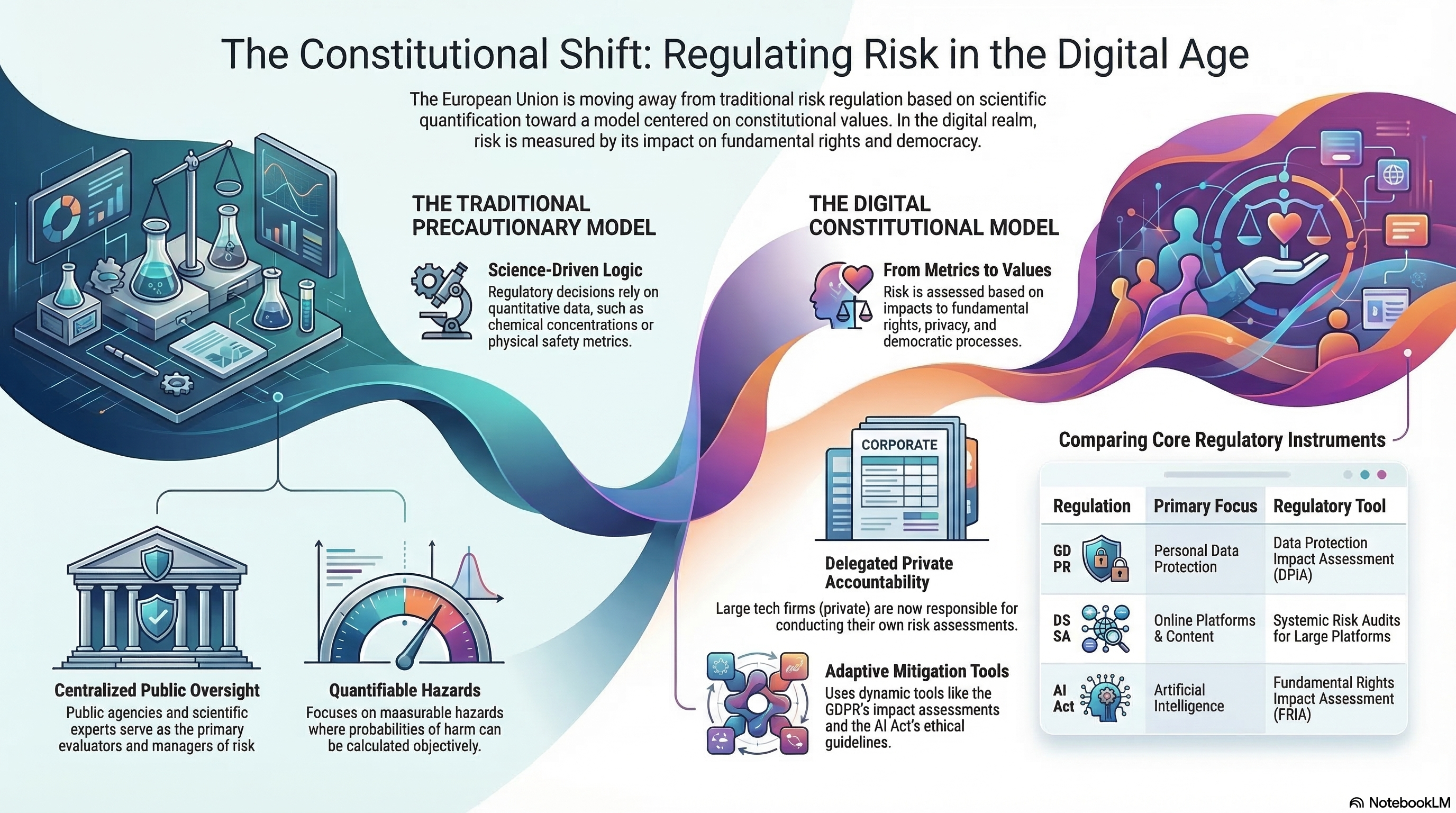

When we think about safety regulation, our minds gravitate toward the tangible: scientists in white coats measuring parts-per-million of a toxin in a river, or technicians using Geiger counters to find millisieverts of radiation. This is the traditional architecture of safety: a world where threats are physical, quantifiable, and managed through hard empirical data.

But how do you measure the "toxicity" of an algorithm? How do you quantify the erosion of a democratic election or the precise "dosage" of a privacy violation? As our lives have migrated into the digital realm, the risks we face have become increasingly intangible and speculative.

Recognizing this, the European Union is executing a profound pivot. We are witnessing the birth of a "constitutional" model of regulation. Through the legislative pillars of the GDPR, the Digital Services Act (DSA), and the AI Act, the EU is moving away from purely scientific risk assessments. Instead, it is treating digital threats as "epistemological uncertainties" that must be governed by the values of fundamental rights and democracy rather than the certainties of the laboratory.

1. The Death of the Lab Report: Constitutionalism as the New Science

For decades, European risk management was anchored in the Precautionary Principle. Born from the German concept of Vorsorge (prudence and foresight) and refined during the North Sea protection conferences of the 1980s, this principle traditionally relied on "hard science" to justify state intervention in the face of uncertainty. If the data suggested a chemical might be harmful, the state acted.

In the digital sector, however, "science" is taking a backseat. Because digital risks are diffuse and context‑dependent, the EU has decided to treat constitutionalism as science. Principles like proportionality and accountability are no longer just legal footnotes; they have become the primary "metrics" used to evaluate whether a technology is safe for society.

"Risk regulation in European digital policy does not seek to rationalise uncertainty through science but to govern epistemological uncertainty through the instruments of constitutionalism, with the goal of addressing the impact of digital technologies on fundamental rights and imbalances of power."

2. The Private "Referee" and the Problem of Informational Asymmetry

In the traditional model, the state acted as the primary evaluator of risk. In the digital model, that power has been reallocated to the "regulatees" themselves. Because private firms, most prominently the Very Large Online Platforms (VLOPs), own the data and the code, they have become the referees of their own impact.

This is not merely an administrative choice; it is a necessity born of informational asymmetry. Public authorities are in a "structurally reactive position" because the state simply cannot see what the platforms see. Technical authority now resides with the entities that command the datasets.

- GDPR (Data Controllers): Private entities must conduct Data Protection Impact Assessments (DPIAs) to self‑evaluate risks to "rights and freedoms."

- DSA (Very Large Online Platforms and Search Engines): The largest platforms and search engines are tasked with identifying and assessing "systemic risks," including risks to democratic processes and public health, effectively performing the role once reserved for state intelligence and public health agencies.

3. The Myth of the Algorithmic Geiger Counter

Traditional regulation deals with quantifiable hazards. We can set a binding limit on nitrogen dioxide in the air because we can measure it. Digital regulation, however, deals with intangible harms.

You cannot use a universal meter to determine if a facial recognition system is "fair." "Fairness" is not a mathematical constant; it is a normative judgment. When the AI Act asks a company to mitigate "bias," it isn't asking for a better math equation; it is asking for a political choice about what social justice looks like in a specific context. The EU is essentially "legalizing" uncertainty, acknowledging that while we cannot put a number on algorithmic harm, we can force a company to be legally accountable for the values it chooses to prioritize.
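To make the point concrete, here is a minimal sketch of why no single number settles whether a system is "fair." It compares two common formalizations from the fairness literature, demographic parity (equal selection rates across groups) and equal opportunity (equal selection rates among the qualified). Neither metric is named in the AI Act or in the argument above; they are chosen here purely for illustration, and the groups, counts, and decision rules are invented toy data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Person:
    group: str       # hypothetical demographic group, "A" or "B"
    qualified: bool  # ground-truth label (assumed known for this toy example)
    selected: bool   # the automated system's decision


def cohort(group, qual_sel, qual_rej, unqual_sel, unqual_rej):
    """Build a list of people in one group from the four outcome counts."""
    return (
        [Person(group, True, True)] * qual_sel
        + [Person(group, True, False)] * qual_rej
        + [Person(group, False, True)] * unqual_sel
        + [Person(group, False, False)] * unqual_rej
    )


def selection_rate(people, group):
    """Share of the whole group that was selected."""
    members = [p for p in people if p.group == group]
    return sum(p.selected for p in members) / len(members)


def true_positive_rate(people, group):
    """Share of the group's qualified members that was selected."""
    qualified = [p for p in people if p.group == group and p.qualified]
    return sum(p.selected for p in qualified) / len(qualified)


def parity_gap(people):
    """Demographic parity gap: difference in selection rates."""
    return abs(selection_rate(people, "A") - selection_rate(people, "B"))


def opportunity_gap(people):
    """Equal opportunity gap: difference in true positive rates."""
    return abs(true_positive_rate(people, "A") - true_positive_rate(people, "B"))


# Toy population: group A has 6 qualified people out of 10, group B has 2 out of 10.
# Rule 1 selects 4 people from each group (equal selection rates).
equal_rates = cohort("A", 4, 2, 0, 4) + cohort("B", 2, 0, 2, 6)
# Rule 2 selects every qualified person and nobody else.
all_qualified = cohort("A", 6, 0, 0, 4) + cohort("B", 2, 0, 0, 8)

for name, outcome in [("equal rates", equal_rates), ("all qualified", all_qualified)]:
    print(f"{name}: parity gap={parity_gap(outcome):.2f}, "
          f"opportunity gap={opportunity_gap(outcome):.2f}")
```

In this toy data each rule scores a perfect zero on one metric and shows a clear gap on the other. Deciding which gap matters is not something the arithmetic can answer; it is the normative choice the regulation asks the company to own.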

4. A Constitutional Reaction to "Information Capitalism"

This regulatory shift is a strategic intervention against Information Capitalism. The EU is using constitutional levers to reclaim public oversight from firms that hold a near‑monopoly on digital knowledge.

This creates a "functional duality": private tech giants are simultaneously the source of the risk and the only ones with the technical capacity to mitigate it. To manage this paradox, the state has moved toward a model of "enforced collaboration." A paradigmatic example is the Strengthened Code of Practice on Disinformation, where the European Commission doesn't just dictate rules from above, but enters into a partnership with platforms to co‑manage the "mosaic" of democratic discourse.

5. From "Banning" to "Dynamic Mitigation": Why AI Isn't Like Ibuprofen

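In sectors like pharmaceuticals, the state adopts a paternalistic posture. A drug cannot hit the market until it receives an ex-ante "yes": a marketing authorization that, under the EU's centralized procedure, rests on the European Medicines Agency's (EMA) scientific assessment of clinical trial data.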

Digital regulation rejects this "gatekeeper" model in favor of ongoing, iterative mitigation.

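- Self-Regulation vs. Paternalism: Instead of requiring prior authorization, the AI Act allows most high-risk AI systems onto the market subject to ongoing obligations, such as the Fundamental Rights Impact Assessment (FRIA) that certain deployers must carry out before putting a system to use.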

- Adaptive Corrections: The goal is not a one‑time "safety stamp" but a cycle of transparency reports and "content moderation tweaks." We are moving from "prior prohibition" to a world of "permanent patches," where rights‑oriented management is a never‑ending process.

Conclusion: The Future of the Algorithmic Society

The transformation of European regulation is an attempt to ensure that technology serves human rights, rather than letting "Information Capitalism" dictate the terms of our social contract. By moving from a scientific lens to a constitutional one, the EU is attempting to protect the very "mosaic" of democracy.

However, we must confront a chilling constitutional challenge: when we delegate the assessment of our fundamental rights to the very platforms that profit from their exploitation, are we giving private actors too much discretion?

The technical "know‑how" may live in Silicon Valley, but the values must live with us. Do you trust a for‑profit algorithm to be the primary guardian of your constitutional freedoms?