Identity Theft and User Cybersecurity Behaviors: A Machine Learning Framework for Predicting and Mitigating Phishing and Social Engineering Attacks Risks

The Knowledge Paradox: Why Being “Cyber‑Aware” Isn't Saving You from Identity Theft

We inhabit a digital ecosystem where the average person spends nearly seven hours a day tethered to a screen, weaving their personal and professional lives into a vast, vulnerable tapestry. As our digital footprints expand, so does the precision of the predators hunting us. Between the fourth quarter of the previous year and the second quarter of the next, the number of unique phishing sites detected surged from approximately 989,000 to over 1.13 million. This isn't just a quantitative increase; it is an evolution. Attackers are now leveraging artificial intelligence to craft tailored lures, lowering the cost of high‑volume deception while increasing its accuracy.

The central irony of our era is that while global cybersecurity investment now exceeds $200 billion annually, identity theft remains one of the fastest‑growing crimes on the planet. We have built formidable technical fortresses (firewalls, encryption, and complex protocols), yet the "human element" remains an unpatched vulnerability. We are effectively spending billions to reinforce the walls of the castle while the inhabitants continue to hand the keys to any stranger who creates a convincing sense of urgency.

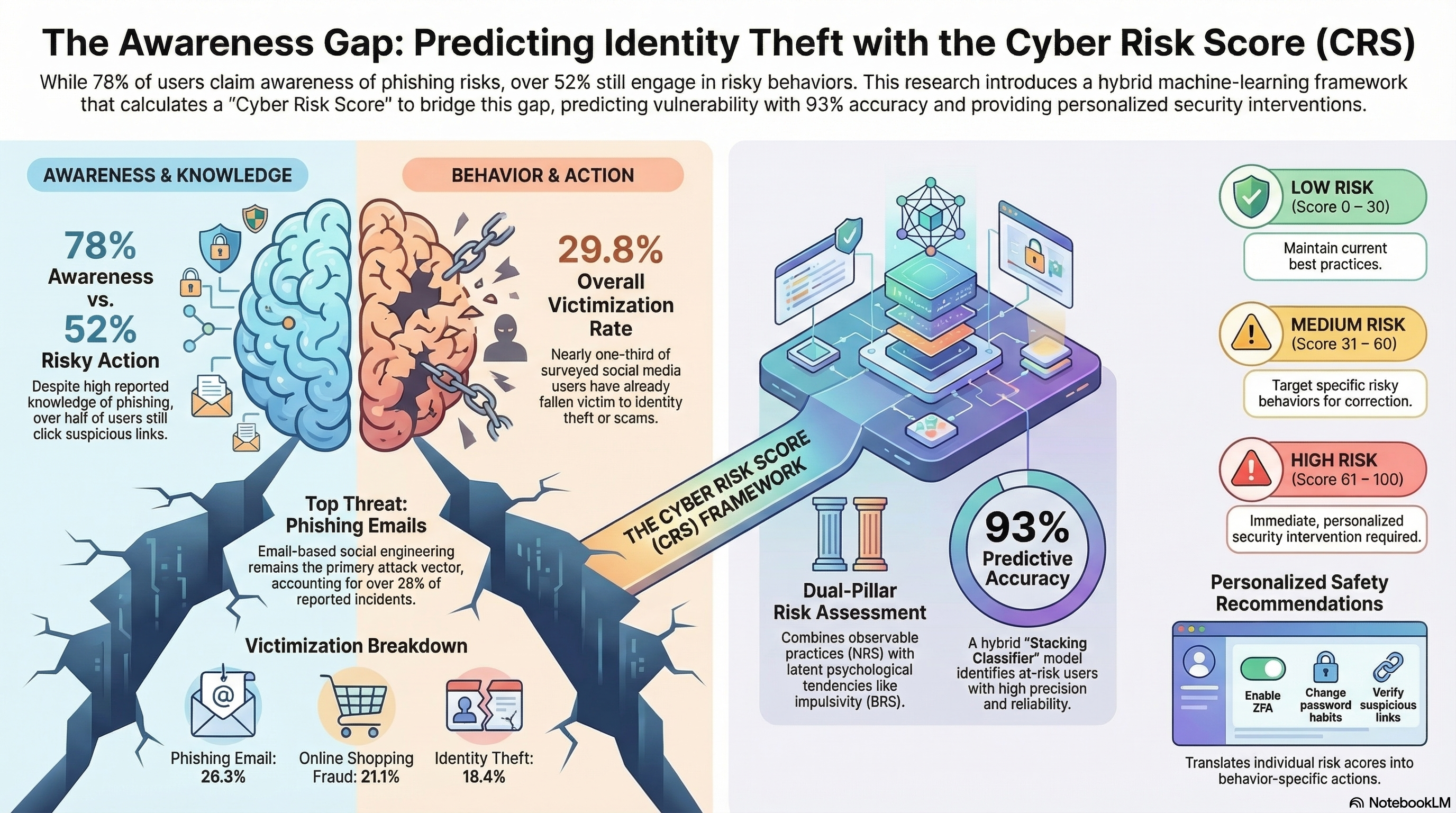

A comprehensive study involving 510 active social media users, detailed in the research paper "Identity Theft and User Cybersecurity Behaviors," illuminates the root of this failure. The data suggests that the crisis isn't a lack of knowledge, but a profound "behavioral gap." We are discovering that knowing a path is dangerous and actually choosing a different one are two entirely different cognitive operations.

Takeaway 1: The 78% Awareness Trap

The most unsettling revelation from the research is the total collapse of the "awareness equals safety" doctrine. The study found that a staggering 77.8% of users are fully aware of the mechanics of phishing and social engineering. However, this cognitive acknowledgment acts as a paper shield. In practice, 52.4% of those same users admitted to clicking on suspicious or unknown links.

This disconnect suggests that "cognitive acknowledgment" is frequently overridden by "impulsive clicking." When attackers deploy false emergencies or social validation cues, they create a "latent psychological bypass" that skirts our logical defenses. We know better, yet in the heat of a digital moment, the urge for a quick response wins.

"A persistent and troubling gap separates users' knowledge from their actual behavior. Empirical evidence shows that risky user behavior is widespread in real‑world settings... knowing the risk does not reliably translate into safer actions."

Takeaway 2: Your "Cyber Risk Score" is Half‑Hidden

To diagnose why users fail, the researchers moved beyond simple checklists to create a dual‑construct measurement system. They argue that your total vulnerability is actually split into two distinct layers: the observable and the invisible.

- Normal Risk Score (NRS): This measures your "rule‑based" hygiene. It is the visible part of your defense: whether you use two‑factor authentication (2FA), how complex your passwords are, and how regularly you update your software.

- Behavioral Risk Score (BRS): This represents the "invisible psychological predispositions." It captures latent tendencies like the urge to share information quickly or the tendency to trust unverified sources under pressure.

While you can see someone using 2FA (NRS), you cannot see their impulsivity (BRS) until the moment of crisis. The research indicates that these hidden mental traits, captured by the BRS, are the stronger predictors of victimization. Traditional security tips focus on the NRS, but the BRS is where the actual breach occurs.
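To make the two layers concrete, here is a minimal sketch of how such a pair of scores could be computed. The field names, the 0 to 1 rating scale, and the equal weighting are all assumptions made for illustration; the study's actual questionnaire items and scoring formulas are not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class UserProfile:
    # Observable, rule-based hygiene (feeds the NRS). True = safe habit present.
    uses_2fa: bool
    strong_passwords: bool
    updates_software: bool
    # Latent tendencies (feed the BRS), rated 0.0 to 1.0; higher = riskier.
    # Hypothetical scale, not the study's instrument.
    shares_info_quickly: float
    trusts_unverified_sources: float


def normal_risk_score(u: UserProfile) -> float:
    """Fraction of hygiene rules the user does NOT follow (0 = safest)."""
    habits = [u.uses_2fa, u.strong_passwords, u.updates_software]
    return sum(not h for h in habits) / len(habits)


def behavioral_risk_score(u: UserProfile) -> float:
    """Unweighted mean of the latent tendency ratings (0 = safest)."""
    traits = [u.shares_info_quickly, u.trusts_unverified_sources]
    return sum(traits) / len(traits)


# A user with perfect visible hygiene but strong impulsive tendencies.
user = UserProfile(
    uses_2fa=True,
    strong_passwords=True,
    updates_software=True,
    shares_info_quickly=1.0,
    trusts_unverified_sources=0.5,
)
print("NRS:", normal_risk_score(user))
print("BRS:", behavioral_risk_score(user))
```

The example user looks safe on every checklist item, so the NRS is zero, while the BRS is high. That is the kind of profile a checklist-only audit would miss.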

Takeaway 3: The Surprising "Peak Risk" Age Groups

The study shatters the stereotype that only the "digitally illiterate" fall for scams. By analyzing a behavioral "Heatmap," researchers found that the highest victimization rates (32.6%) are shared equally by the 18–24 and 35–50 age groups.

The data regarding 18–24‑year‑olds, the "digital natives," is particularly revealing. Comfort with technology appears to breed a dangerous overconfidence. This group showed high engagement in risky practices: a proportion of 0.62 used public Wi‑Fi for sensitive accounts, and 0.54 clicked suspicious links. Most tellingly, their "Unique Passwords" metric sat at 0.54, suggesting that even though they possess high technical literacy, they choose convenience over caution, effectively leaving their digital doors unlocked.

Takeaway 4: AI is Now Better at Predicting Your Mistakes Than You Are

Where humans fail to see their own digital blind spots, a "digital jury of algorithms" succeeds. The research utilized a "Hybrid Stacking Classifier," a framework that combined the strengths of three major models: Random Forest, XGBoost, and CatBoost. While CatBoost was the strongest individual contributor, the "stacking" method allowed the AI to smooth out individual model errors, resulting in a remarkable 93.14% accuracy in predicting potential victims.
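The stacking idea itself is simple enough to sketch in plain Python. The example below is a toy, not the paper's pipeline: single-feature decision stumps stand in for Random Forest, XGBoost, and CatBoost, a small hand-written logistic regression plays the meta-learner, and the data is synthetic. What it does preserve is the core mechanism, where out-of-fold predictions from the base models become the input features of a second-level model.

```python
import math
import random


def fit_stump(X, y, feature):
    """Pick the threshold on one feature that minimizes training error."""
    best_t, best_err = 0.0, len(y) + 1
    for t in sorted({row[feature] for row in X}):
        err = sum((row[feature] >= t) != bool(lab) for row, lab in zip(X, y))
        if err < best_err:
            best_t, best_err = t, err
    return lambda row: 1.0 if row[feature] >= best_t else 0.0


def fit_bases(X, y):
    """Three weak base learners (stand-ins for RF, XGBoost, CatBoost)."""
    return [fit_stump(X, y, f) for f in range(3)]


def fit_logistic(Z, y, epochs=500, lr=0.5):
    """Meta-learner: logistic regression trained by batch gradient descent."""
    w, b = [0.0] * len(Z[0]), 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * len(w), 0.0
        for z, lab in zip(Z, y):
            p = 1 / (1 + math.exp(-(sum(wi * zi for wi, zi in zip(w, z)) + b)))
            for i, zi in enumerate(z):
                gw[i] += (p - lab) * zi
            gb += p - lab
        w = [wi - lr * g / len(y) for wi, g in zip(w, gw)]
        b -= lr * gb / len(y)
    return lambda z: 1 if sum(wi * zi for wi, zi in zip(w, z)) + b > 0 else 0


def fit_stack(X, y, folds=2):
    """Train the meta-learner on out-of-fold base predictions."""
    Z = [None] * len(X)
    for k in range(folds):
        train = [i for i in range(len(X)) if i % folds != k]
        bases = fit_bases([X[i] for i in train], [y[i] for i in train])
        for i in range(k, len(X), folds):  # rows held out of this fold's fit
            Z[i] = [m(X[i]) for m in bases]
    meta = fit_logistic(Z, y)
    bases = fit_bases(X, y)  # refit on all training rows for prediction time
    return lambda row: meta([m(row) for m in bases])


# Synthetic data: three behavior ratings in [0, 1) and a made-up victim label.
rng = random.Random(0)
X = [[rng.random() for _ in range(3)] for _ in range(300)]
y = [1 if 0.5 * r[0] + 0.3 * r[1] + 0.2 * r[2] > 0.5 else 0 for r in X]

model = fit_stack(X[:200], y[:200])
accuracy = sum(model(r) == t for r, t in zip(X[200:], y[200:])) / 100
print(f"held-out accuracy: {accuracy:.2f}")
```

Training the meta-learner on out-of-fold predictions matters: if the base models scored the same rows they were fitted on, the second level would learn to trust them more than their real-world accuracy justifies.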

This system doesn't just predict; it possesses a form of "algorithmic empathy" through the use of SHAP (SHapley Additive exPlanations). This allows the AI to explain why it views a user as a target. For instance, the AI identified "Share Info Quickly" as the single most dominant indicator of risk, accounting for 20.36% of the total vulnerability score. It can look at a user's behavioral data and say, "You are at risk because your impulse to validate others outweighs your caution."
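SHAP builds on Shapley values from cooperative game theory: each feature is credited with its average marginal contribution to the prediction across all orderings in which features could be "switched on." The sketch below computes exact Shapley values by brute force for a made-up three-trait risk model. The model, its trait names, and its weights are purely illustrative assumptions, and the real `shap` library uses faster approximations rather than full enumeration.

```python
from itertools import permutations

FEATURES = ["share_info_quickly", "click_unknown_links", "no_2fa"]


def risk_model(active: frozenset) -> float:
    """Hypothetical risk score for the set of risky traits a user exhibits."""
    score = 0.1  # baseline risk with no risky traits
    if "share_info_quickly" in active:
        score += 0.4
    if "click_unknown_links" in active:
        score += 0.2
    if "no_2fa" in active:
        score += 0.1
    # Interaction: quick sharing is worse when combined with risky clicking.
    if {"share_info_quickly", "click_unknown_links"} <= active:
        score += 0.1
    return score


def shapley_values(features, value):
    """Average each feature's marginal contribution over all orderings."""
    totals = {f: 0.0 for f in features}
    orderings = list(permutations(features))
    for order in orderings:
        seen = set()
        for f in order:
            before = value(frozenset(seen))
            seen.add(f)
            totals[f] += value(frozenset(seen)) - before
    return {f: t / len(orderings) for f, t in totals.items()}


phi = shapley_values(FEATURES, risk_model)
total = sum(phi.values())
for name, v in sorted(phi.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {v:.3f} ({100 * v / total:.1f}% of attributed risk)")
```

The attributions always sum to the gap between the full prediction and the baseline, which is what makes a statement like "this trait accounts for X% of the risk" well defined. Note that the interaction bonus is split evenly between the two traits that cause it.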

Takeaway 5: The End of "One‑Size‑Fits‑All" Advice

The ultimate goal of this research is to move past generic "awareness tips" toward a "behavior‑aware dynamic recommendation framework." Generic advice like "change your password" is replaced by personalized, context‑specific interventions.

Instead of a passive pamphlet, this framework maps SHAP‑identified risk drivers to specific mitigations. For example, if the AI detects a high BRS due to impulsive clicking, it doesn't just send a reminder; it suggests browser‑level controls that delay link‑clicking, creating a "cognitive speed bump" that forces the user to move from impulse to analysis. If the risk is technical (NRS), the system prioritizes 2FA enrollment. It is a shift from educating the user to architecting their environment for safety.
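As a rough illustration of that mapping step, the sketch below ranks a user's risk drivers by their contribution and looks up a matching intervention for the top ones. The driver names, the contribution numbers, and the lookup table are hypothetical; only the link-delay and 2FA mitigations come from the description above, and the confirmation prompt is an invented placeholder.

```python
# Hypothetical lookup table from risk driver to intervention.
MITIGATIONS = {
    "impulsive_clicking": "Enable a browser-level delay before opening links",
    "no_2fa": "Enroll in two-factor authentication",
    # Invented example entry, not taken from the study.
    "share_info_quickly": "Add a confirmation prompt before sending personal data",
}


def recommend(contributions: dict[str, float], top_n: int = 2) -> list[str]:
    """Return mitigations for the top_n drivers with a positive contribution."""
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return [
        MITIGATIONS[name]
        for name, weight in ranked[:top_n]
        if weight > 0 and name in MITIGATIONS
    ]


# Example per-user contributions, e.g. as produced by a SHAP-style explainer.
user_drivers = {
    "impulsive_clicking": 0.30,
    "no_2fa": 0.10,
    "share_info_quickly": 0.05,
}
for tip in recommend(user_drivers):
    print("-", tip)
```

Because the recommendations are driven by per-user contributions rather than a fixed list, two users with the same overall risk level can receive entirely different advice.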

Conclusion: Toward a Behavior‑First Defense

The evidence is clear: our current reliance on awareness campaigns has reached its ceiling. The future of cybersecurity will not be won solely by better firewalls, but by "microlearning interventions" and "behavior‑oriented cyber‑safety." We must stop trying to teach users to think like machines and start building machines that understand the way humans actually behave.

If an algorithm can predict your next digital mistake with 93% accuracy, are you ready to let AI manage your behavioral blind spots to keep your identity safe?