Axiomatic Foundations of Risk Measures: A Comprehensive Mathematical Treatment

Beyond the Black Swan: 5 Counter‑Intuitive Truths About Measuring Financial Risk

In 1994, the release of the RiskMetrics framework by J.P. Morgan felt like an “End of History” moment for financial engineering. It provided the industry with Value‑at‑Risk (VaR), a single, elegant number that promised to quantify the loss a portfolio should not exceed over a given horizon at a given confidence level. It was the ultimate “peace of mind” metric.

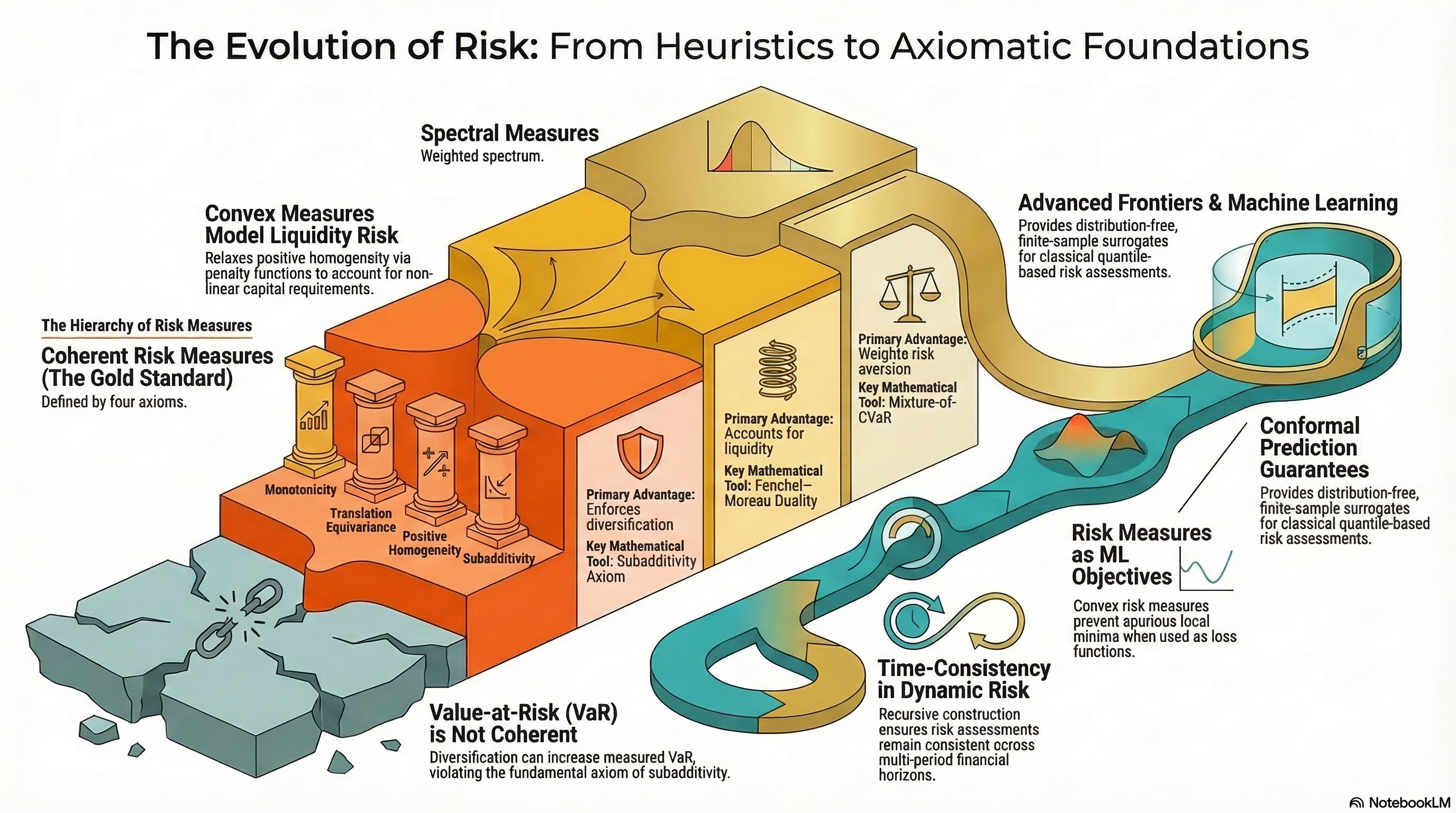

Yet, as the decades since have shown, this elegance was a mirage. We have spent thirty years refining our ability to measure a metric that is often mathematically blind to the very risks that collapse systems. The problem isn't just that our models are “wrong” (all models are), but that they frequently lack the essential mathematical properties required to reflect economic reality. To move beyond the simplistic “Black Swan” rhetoric, we must look toward the axiomatic foundations of risk management. By shifting from heuristic rules of thumb to rigorous representation theorems, we discover that risk measurement is less about predicting the future and more about defining the limits of our own skepticism.

1. The VaR Paradox: Why Diversification Can Make Your Risk Look Worse

The most fundamental commandment in finance is to diversify. We are taught that spreading assets across independent, idiosyncratic bets must necessarily reduce total risk. However, Value‑at‑Risk (VaR) is not subadditive in general, which leads to scenarios where the “risk” of a combined portfolio is reported as higher than the sum of its parts.

This is the “VaR Paradox.” If a risk measure does not satisfy \rho(X+Y) \leq \rho(X) + \rho(Y), it is economically incoherent. It creates a world where managers are actively penalized for de‑risking through aggregation. As the research puts it:

“Value‑at‑Risk, while intuitive, fails to account for the magnitude of losses beyond the threshold and, critically, is not subadditive: diversification can appear to increase measured risk. This violates the economic intuition that the merger of two risks should not create a greater risk.”

We can see this failure in a simple thought experiment. Imagine two independent “safe” bets, each with a 6% probability of a $1 loss and a 94% probability of no loss. Take VaR at the 90% confidence level (\alpha = 0.9) to be the smallest loss that is not exceeded with probability at least 90%. Each bet loses nothing with probability 94%, so the VaR of each individual bet is $0. If we combine them, however, the probability of at least one loss is 1 - (0.94)^2 \approx 11.64\%. Because 11.64% exceeds the 10% tail, the VaR of the combined portfolio jumps to $1. In the eyes of VaR, the “diversified” portfolio carries more risk than the two bets held separately (1 > 0 + 0), which is exactly a violation of subadditivity. A tool that penalizes the fundamental act of risk‑spreading is a tool that is fundamentally broken.
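The arithmetic of this thought experiment can be checked with a short sketch. It assumes VaR is defined as the smallest loss level x with P(loss <= x) >= alpha; the helper name `var_discrete` and the variable names are illustrative and not taken from any library.

```python
from itertools import product


def var_discrete(outcomes, alpha):
    """Smallest loss level x such that P(loss <= x) >= alpha.

    `outcomes` is a list of (loss, probability) pairs.
    """
    cumulative = 0.0
    for loss, prob in sorted(outcomes):
        cumulative += prob
        # Small tolerance guards against floating-point round-off.
        if cumulative >= alpha - 1e-12:
            return loss
    return max(loss for loss, _ in outcomes)


# One "safe" bet: lose $1 with probability 6%, lose nothing otherwise.
single_bet = [(0.0, 0.94), (1.0, 0.06)]

# Distribution of the summed loss of two independent copies of the bet.
combined = {}
for (loss_a, p_a), (loss_b, p_b) in product(single_bet, single_bet):
    total = loss_a + loss_b
    combined[total] = combined.get(total, 0.0) + p_a * p_b
combined = sorted(combined.items())

alpha = 0.9
var_single = var_discrete(single_bet, alpha)
var_combined = var_discrete(combined, alpha)

print("VaR of one bet:      ", var_single)
print("VaR of combined bets:", var_combined)
print("Subadditive here?    ", var_combined <= 2 * var_single)
```

The combined portfolio has probability 0.94^2 = 0.8836 of no loss, which falls short of 0.9, so the 90% VaR has to move up to the next loss level.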

2. Risk Measures are Stress Tests in Disguise

We often treat a risk measure like a thermometer: a device that reads the “temperature” of a distribution. But the Artzner–Delbaen–Eber–Heath (ADEH) Representation (Theorem 3.9) suggests a much more proactive interpretation. It proves that every coherent risk measure is the worst expected loss over a set of plausible stress scenarios.

Mathematically, a coherent risk measure is expressed as: \rho(X) = \sup_{Q \in \mathcal{Q}} E_Q[-X]

This representation forces us to admit that a risk measure is not a crystal ball, but a filter for our own skepticism. When we use a measure like Conditional Value‑at‑Risk (CVaR), we aren't just calculating an average of the tail; we are stress‑testing the portfolio against an entire family of “alternative” probability distributions.

For CVaR at level \alpha, these alternative distributions Q are defined by a specific bound on their Radon‑Nikodym density: dQ/dP \leq 1/(1-\alpha). This is the “PhD‑tier” actionable insight: CVaR is a robust optimization against every model that up‑weights any scenario of your current distribution by a factor of at most 1/(1-\alpha). At \alpha = 0.9 that factor is 10, so CVaR is the mathematical embodiment of a manager saying, “Show me the worst‑case average if my tail probabilities are actually ten times worse than I think they are.”
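A small numerical sketch can make the equivalence concrete. It works with losses L = -X on synthetic, equally likely scenarios (an assumption made purely for illustration) and compares the familiar tail average with the stress-test form: the largest E_Q[L] over reweightings q_i <= p_i / (1 - alpha). The function names are hypothetical.

```python
import random


def cvar_tail_average(losses, alpha):
    """Average of the worst (1 - alpha) fraction of equally likely losses.

    Assumes len(losses) * (1 - alpha) is (numerically) a whole number.
    """
    ordered = sorted(losses, reverse=True)
    k = round(len(ordered) * (1 - alpha))
    return sum(ordered[:k]) / k


def cvar_dual(losses, probs, alpha):
    """Maximise E_Q[loss] subject to q_i <= p_i / (1 - alpha), sum(q) = 1.

    The maximiser is greedy: load as much weight as the density cap
    allows onto the largest losses until the total weight reaches 1.
    """
    cap = [p / (1 - alpha) for p in probs]
    q = [0.0] * len(losses)
    remaining = 1.0
    worst_first = sorted(range(len(losses)), key=lambda j: losses[j], reverse=True)
    for i in worst_first:
        q[i] = min(cap[i], remaining)
        remaining -= q[i]
        if remaining <= 1e-15:
            break
    return sum(qi * li for qi, li in zip(q, losses)), q


random.seed(0)
n, alpha = 1000, 0.9
losses = [random.gauss(0.0, 1.0) for _ in range(n)]  # synthetic scenarios
probs = [1.0 / n] * n

primal = cvar_tail_average(losses, alpha)
dual, q = cvar_dual(losses, probs, alpha)
max_ratio = max(qi / pi for qi, pi in zip(q, probs))

print("tail average :", primal)
print("worst-case Q :", dual)
print("max dQ/dP    :", max_ratio)
```

Under these assumptions the two numbers agree up to round-off, and the worst-case scenario weights sit exactly at the density cap on the largest losses and at zero elsewhere.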

3. The Case for Convexity: Why Scaling Isn't Linear

For years, the gold standard of risk was “coherence,” which includes the axiom of positive homogeneity: if you double the size of your position, you double your risk. On paper, this is clean. In a real market, it is a lie.

The shift toward Convex Risk Measures (introduced by Föllmer and Schied) relaxes this linear requirement. The logic is rooted in market friction and liquidity risk. Doubling a $10 million position might be easy; doubling a $10 billion position often more than doubles the risk because the depth of the market is finite. If you are forced to exit a massive position, the slippage and price impact create a non‑linear explosion of loss.

The brilliance of convex measures lies in the Föllmer–Schied penalty function \alpha(Q) (a function of the scenario Q, not to be confused with the confidence level \alpha used above). While coherent measures have a binary “in or out” set of stress scenarios, convex measures allow for a “budget” of skepticism. They assign a “graded cost of implausibility” to different models. This allows a risk manager to say that while certain extreme scenarios are “implausible,” they are not “impossible”; they simply carry a mathematical penalty that increases the more they deviate from our base‑case assumptions.
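In the penalty-function form, a convex risk measure reads \rho(X) = \sup_Q ( E_Q[-X] - \alpha(Q) ). One standard example is the entropic risk measure, \rho(X) = (1/\theta) \log E[\exp(-\theta X)], whose penalty is relative entropy scaled by 1/\theta. The sketch below uses it only as an illustration (the coin-flip position and \theta = 1 are arbitrary assumptions) to show that doubling a position can more than double the measured risk.

```python
import math


def entropic_risk(pnl, probs, theta):
    """Entropic risk (1/theta) * log E[exp(-theta * X)] of a discrete P&L X."""
    expectation = sum(p * math.exp(-theta * x) for x, p in zip(pnl, probs))
    return math.log(expectation) / theta


# Illustrative position: win or lose 1 with equal probability.
pnl = [-1.0, 1.0]
probs = [0.5, 0.5]
theta = 1.0  # risk-aversion parameter, chosen arbitrarily for the example

risk_1x = entropic_risk(pnl, probs, theta)
risk_2x = entropic_risk([2 * x for x in pnl], probs, theta)

print("risk of position        :", risk_1x)
print("risk of doubled position:", risk_2x)
print("twice the original risk :", 2 * risk_1x)
```

For this position the risk equals log(cosh(theta * size)) / theta, which grows faster than linearly in the position size. That is the behaviour positive homogeneity rules out and convexity permits.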

4. The Time‑Consistency Trap: Your Future Self is Your Biggest Risk

A risk strategy that looks brilliant today can become a liability if it forces you into inconsistent decisions tomorrow. This is the “Time‑Consistency Trap.” A dynamic risk measure is strongly time‑consistent only if today's assessment agrees with tomorrow's: whenever one position will be judged at least as risky as another in every state tomorrow, it must also be judged at least as risky today.

Shockingly, the industry‑standard CVaR, when applied over multiple time periods, is not time‑consistent. If you calculate your CVaR today for a two‑week horizon, and then recalculate it next week, the two assessments can contradict each other even if no new information has entered the market. The reason is that the tail CVaR averages over is selected afresh from the conditional distribution at each date, so CVaR does not in general satisfy the recursive property \rho_s(X) = \rho_s(-\rho_t(X)) for s \leq t.

To solve this, modern theory moves toward Recursive Construction. By building risk measures step‑by‑step, so that the risk today is a function of the anticipated risk tomorrow, we ensure that our strategy remains stable. Without time‑consistency, a firm may find itself constantly oscillating between “risk‑on” and “risk‑off” stances based on the mere passage of time, effectively becoming a victim of its own modeling artifacts.
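A toy two-step tree shows the gap between the two approaches. The sketch below is an illustration under assumed numbers (branch probabilities, terminal losses and the level alpha = 0.8 are all made up): it computes the static CVaR of the terminal loss and the recursively composed one-step CVaR, in which tomorrow's risk is fed back in as today's loss.

```python
def cvar(losses, probs, alpha):
    """Discrete CVaR of a loss: max of E_Q[loss] over q_i <= p_i / (1 - alpha)."""
    remaining, total = 1.0, 0.0
    for loss, p in sorted(zip(losses, probs), reverse=True):
        q = min(p / (1 - alpha), remaining)  # weight allowed on this outcome
        total += q * loss
        remaining -= q
        if remaining <= 1e-15:
            break
    return total


alpha = 0.8
step_prob = {"u": 0.9, "d": 0.1}  # same up/down probabilities at both steps
terminal_loss = {                  # loss realised at the end of each path
    ("u", "u"): 0.0,
    ("u", "d"): 10.0,
    ("d", "u"): 10.0,
    ("d", "d"): 30.0,
}

# Static view: one CVaR applied to the whole two-step loss distribution.
paths = list(terminal_loss)
static_cvar = cvar(
    [terminal_loss[p] for p in paths],
    [step_prob[p[0]] * step_prob[p[1]] for p in paths],
    alpha,
)

# Recursive view: one-step CVaR at each time-1 node, then CVaR of those risks.
risk_at_t1 = {
    first: cvar(
        [terminal_loss[(first, second)] for second in "ud"],
        [step_prob[second] for second in "ud"],
        alpha,
    )
    for first in "ud"
}
recursive_cvar = cvar(
    [risk_at_t1[first] for first in "ud"],
    [step_prob[first] for first in "ud"],
    alpha,
)

print("static CVaR   :", static_cvar)
print("recursive CVaR:", recursive_cvar)
```

In this example the two numbers differ, which is the failure of the recursive property in miniature. The composed measure satisfies the recursion by construction, at the price of no longer being a plain CVaR of the terminal loss.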

5. The Algorithmic Shift: Trading Coherence for Validity

The most provocative frontier in risk management is the move away from assuming any distribution at all. Traditional methods are “model‑based”: they assume the market follows a specific bell curve or power law. The future is “model‑agnostic,” driven by Conformal Prediction.

Methods like Conformal Risk Control (CRC) change the fundamental trade‑off of financial engineering. In the classical axiomatic world, we demand properties like positive homogeneity. However, Conformal VaR and CRC intentionally break some of these axioms to gain something more valuable: a finite‑sample, distribution‑free guarantee.

CRC does not try to guess the “true” probability of a market crash. Instead, it uses calibration data to tune a parameter \hat{\lambda} so that the expected loss on the next, unseen observation, E[\ell_{n+1}(\hat{\lambda})], stays below a level chosen in advance. It provides a rigorous bound on error that holds true as long as the data is “exchangeable” (essentially, that the future belongs to the same general regime as the past). This is the ultimate counter‑intuitive truth: to get a guarantee that actually holds up in a real‑world sample, we may have to abandon the mathematical “coherence” we spent decades perfecting. We trade the elegance of the formula for the validity of the result.
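As a rough sketch of the idea, suppose \lambda is a VaR-style loss threshold and the controlled loss is the exceedance indicator 1{loss > \lambda}, which is bounded by 1 and non-increasing in \lambda. The commonly stated CRC rule then picks the smallest \lambda whose inflated empirical risk, (n/(n+1)) * R_hat(\lambda) + B/(n+1), is at most the target level. The function name, the synthetic Gaussian calibration data and the 5% target below are all assumptions made for illustration.

```python
import math
import random


def crc_threshold(calib_losses, target, upper_bound=1.0):
    """Smallest calibration loss `lam` passing the conformal risk control check
    for the exceedance loss 1{loss > lam}.

    The check (exceed_count + upper_bound) / (n + 1) <= target is algebraically
    the same as (n / (n + 1)) * empirical_risk + upper_bound / (n + 1) <= target.
    """
    n = len(calib_losses)
    for lam in sorted(calib_losses):
        exceed_count = sum(loss > lam for loss in calib_losses)
        if (exceed_count + upper_bound) / (n + 1) <= target:
            return lam
    return math.inf  # no finite threshold can meet the target with this n


random.seed(1)
n, target = 999, 0.05
calib_losses = [random.gauss(0.0, 1.0) for _ in range(n)]  # synthetic history

lam_hat = crc_threshold(calib_losses, target)
calib_exceedances = sum(loss > lam_hat for loss in calib_losses)

print("conformal threshold    :", lam_hat)
print("calibration exceedances:", calib_exceedances, "of", n)
```

With the indicator loss, the rule reduces to picking a slightly conservative empirical quantile: the threshold tolerates at most target * (n + 1) - 1 calibration exceedances. No distributional model is fitted; the guarantee rests only on exchangeability of the calibration data and the next observation.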

Conclusion: The Future of Risk is Axiomatic

The evolution of risk management is a journey from “feeling” to “foundation.” We are moving away from an era where we simply pick a metric because it's easy to calculate or “industry standard.” Instead, we are entering an era of Normative Foundations.

The critical question for any sophisticated risk professional is no longer “What is our VaR?” but rather: “What properties does my risk measure satisfy, and do those properties align with my economic constraints?”

Risk is not a single number to be reported; it is a set of rigorous properties we demand from our models to ensure they don't dissolve the moment the market moves. Whether you are using the dual representation to build better stress tests or leveraging conformal prediction to gain distribution‑free control, the goal is the same: building a framework that is robust enough to survive the death of its own assumptions.